The W3C standard constraint language for RDF: SHACL

A brief history of the new standard and some toys to play with it.

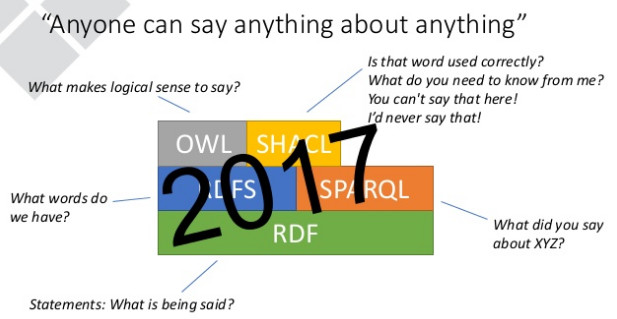

Many people have complained about how the Web Ontology Language, or OWL, wasn’t a very good constraint language for RDF data. They didn’t realize that it wasn’t designed to be a constraint language, in which you define the structure of a dataset as a guide to applications so that these applications know what to expect. OWL was designed to do other things, and we finally have the W3C standard RDF constraint language we’ve been waiting for, but before we discuss it, a little history puts it in better context.

For nearly all computer applications ever, there has been some ability to define what should be in the data, such as the columns of a relational table and their data types, the elements and attributes of a set of XML documents, or the classes of data that an object-oriented program is working with and their attributes. Data was usually not even added to these data sets until it conformed to the descriptions. These data definitions are known as prescriptive schemas, but OWL’s goal was to provide descriptive schemas: metadata about existing data sets, typically from the web, so that you could infer new knowledge about the resources that you found. (When I mention OWL, assume that I’m including its base layer RDFS as well.)

When building large RDF applications, though, prescriptive schemas can provide some benefits, and while OWL can do a bit of this, it can’t do it very well. And, the OWL tools that can check whether constraints have been violated are fairly big and heavy because of all of their additional inferencing capabilities. So people complained. (For a good overview of the cool things that OWL is currently being used for, see Jim Hendler’s 2016 ESWC talk.)

At my former employer TopQuadrant, principal engineer Holger Knublauch developed a triples-based constraint language for RDF called SPIN, for “SPARQL Inferencing Notation.” It took advantage of SPARQL’s ability to define constraints–basically, you would query for things you didn’t want to see in the data, like an invoice instance with no approvedBy value, and if you found any, you knew where the constraint was violated. SPIN provided a structure for storing these queries as metadata about a dataset, and it was very useful in TopQuadrant’s customer work. I wrote about it here in 2009 and in 2010.

It was so useful that eventually some of these customers, as well as TopQuadrant and some TopQuadrant colleagues at other companies, started a W3C working group to develop a new constraint language that built on the ideas of SPIN: the Shapes Constraint Language, or SHACL. (Get it? “Shackle”? Constraints?) SHACL is now a Recommendation: an official W3C standard just like HTML, XML, CSS, and the RDF standards.

The TopQuadrant page An Overview of SHACL Features and Specifications gives a nice overview of the components of SHACL and their relationships, and I will be digging deeper into that in the coming weeks.

Some more great recent SHACL news is the availability of an API with command line tools to try SHACL out. I’ve been playing with the shaclvalidate.sh shell script tool (a Windows batch file is also included) which reads a file of triples that include constraints and instance data and then lists any violated constraints. A form-based SHACL playground is also available; I took the sample constraints and the Turtle version of the sample data available on that page, combined them into a single file, and fed that file to shaclvalidate.sh. The validation report that it created pointed out that the schema:Person instance’s death date was earlier than its birth date, thereby violating one of the defined constraints. These reports are themselves sets of triples, making this kind of validation easier to plug this validation process into a larger workflow.

The open source SHACL API that Holger created is available on github. The week that he released the command line tools I was actually trying to code up a SHACL command line validator myself around the API (with much kind help to my atrophied Java skills from Andy Seaborne), so I was very glad to see Holger release something that saved me from further Java coding.

Holger’s API includes many test cases that I know will teach me a lot about SHACL’s capabilities. For example, one test demonstrates the ability to define a constraint with a SPARQL query, one of the original inspirations for this constraint language, and I have already successfully run this test with the validation shell script. SPARQL-based constraints are less necessary in SHACL than you might think, because the core of SHACL is a vocabulary to define many common constraint conditions, but it’s still great to see them, because they add so much flexibility to the constraints that you can define–for example, you could specify that an approvedBy value is only required if invoiceAmount is greater than a certain value.

I’m looking forward to playing more with SHACL. A good next step for anyone interested is to review the slides titled Shaping the Big Ball of Data Mud: W3C’s Shapes Constraint Language (SHACL) that TopQuadrant’s Richard Cyganiak gave to the Lotico Berlin Semantic Web meetup last November. I’ve copied his excellent conclusion slide above.

Share this post